Research Article Open Access

Automatic Extraction of 3D Objects from LiDAR Data

Abdelmounaim Bellakaout1*, Cherkaoui Omari Mohammed1, Ettarid Mohamed1 and Touzani Abderrahmane2

1Hassan II Institute of Agronomy and Veterinary Medicine, Morocco

2Regional African Centre of Space Sciences and Technologies in French Language, Morocco

- *Corresponding Author:

- Bellakaout Abdelmounaim

Hassan II Institute of Agronomy and Veterinary Medicine

Madinat Al Irfane, Rabat, Morocco

Tel: 0661109281

Fax: 0537810978

E-mail: bellakaout_a@yahoo.fr

Received March 17, 2014; Accepted April 07, 2014; Published April 14, 2014

Citation: Bellakaout A, Cherkaoui Omari M, Ettarid M, Touzani A (2014) Automatic Extraction of 3D Objects from LiDAR Data. J Archit Eng Tech 3:123. doi: 10.4172/2168-9717.1000123

Copyright: © 2014 Bellakaout A, et al. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.


Abstract

Aerial topographic surveys using Light Detection and Ranging (LiDAR) technology collect dense and accurate information about the terrain surface, and LiDAR is becoming one of the important tools in the geosciences for studying the earth's surface. Classifying LiDAR data to extract ground, vegetation, and buildings is an important step in numerous applications such as 3D city modelling, remote sensing, geographical information systems (GIS), mapping, and navigation. Regardless of what the scan data will be used for, an automatic process is needed to handle the immense amounts of data collected, because manual processing is long and expensive. This paper presents an approach for the automatic classification of aerial LiDAR data into five groups (buildings, trees, roads, linear objects and soil) using single-return LiDAR and processing the point cloud without generating a DEM. Topological relationships and height variation analysis are used to segment the entire point cloud preliminarily into upper contour, lower contour, uniform surface, non-uniform surface, linear objects, and the rest. This primary classification serves, on the one hand, to identify the upper and lower contours of each building in the urban scene, which are needed to model building façades, and, on the other hand, to extract the point cloud of the uniform surface, which contains roofs, roads and ground and is used in the second phase of classification. A second algorithm, also based on topological relationships and height variation analysis, segments the uniform surface into building roofs, roads and ground. The proposed approach has been tested on two areas: the first is a housing complex and the second is a primary school. It gives successful classification results for the building, vegetation and road classes.

Keywords

LIDAR; Aerial; Segmentation; Urban; Vegetation; Building

Introduction

Airborne laser systems (LIDAR) are a remote sensing technology that integrates a direct georeferencing mechanism (INS-GPS) and measures distance by illuminating a target with a laser and analyzing the reflected light. It provides a dense 3D point cloud that faithfully represents the scanned area and requires careful and powerful processing.

The interpretation of such a LIDAR point cloud requires two steps: segmentation and 3D modeling. We are therefore interested, first, in the automatic segmentation of the point cloud.

The automatic classification of urban areas using LIDAR data, or other sources such as camera images, is an important area of research. Most of the demand for these urban models centers on the creation of 3D virtual models of cities. Building extraction is a key step in this segmentation process; several works have segmented buildings in many different ways, which are summarized in the next section. Processing the LIDAR point cloud automatically with dedicated algorithms produces plans quickly. The present work deals with the segmentation of 3D data of urban scenes by developing a chain of automatic processes leading to the production of 3D models of urban scenes.

In this study we present an automatic approach for LIDAR data segmentation: the input is a point cloud, and the output is a point cloud segmented into five classes: buildings, trees, roads, linear objects and soil. The methodology relies on topological relationships and height variation analysis. First, we divide the point cloud into upper contour, lower contour, uniform surface, non-uniform surface, linear objects, and the rest. Second, we divide only the uniform surface into roof and ground points. Finally, we divide the ground points into soil and road points.

State of the Art

The segmentation that interests us in this study can be conducted following three approaches. The first is based only on the point cloud; the second relies on derived products, that is, the image generated from the raw points; and the third combines both.

Approaches based solely on raw point cloud

These approaches process only the raw point cloud, without referring to any product derived from it. Non-limiting examples include segmentation using the octree structure [1], the algorithm proposed by Kraus and Pfeifer [2] based on linear prediction [3], and 3D area detection [4]. Lari et al. [5] propose an algorithm that organizes the point cloud in a kd-tree, after which the result is filtered [5-7]; two waveform processing methods, non-linear least squares and a marked point process approach, are used in [7]. Höfle et al. [8] combine raster-based and point cloud-based methods to extract only vegetation, and Reitberger et al. [9] use normalized cut segmentation to segment single trees in 3D from airborne LIDAR data.

The advantage of these approaches is that they preserve the original characteristics of the point cloud (accuracy, location, topographic relationships, etc.) and that using the first echo is reliable. Their major drawback is the relatively large memory they require, together with the assumption that the urban scene is composed only of trees and buildings, which does not hold in general.

Approaches inspired by image processing

In these approaches the processing is essentially based on an image produced by interpolation and/or segmentation. Here, segmentation means generating objects consisting of similar pixels. Digital image processing techniques are used, for example methods based on maximum likelihood [10,11], the Bayesian network [12], the surface-growing approach [13], the Fourier transform [14] and distribution analysis [15]. Carlberg [16] uses 3D shape analysis and region growing to identify “planar” regions, which correspond to ground and roofs, and “scatter” regions, which correspond to trees.

These approaches have several advantages: they use known and established algorithms from digital photogrammetry and remote sensing, they are available in software packages and/or as open source, and they are fast to process and compute. However, their major drawback is the loss of information caused by the resampling step.

Approaches combining the point cloud with other data

According to some researchers, LiDAR data alone are not sufficient and need to be combined with other data sources. Cheng et al. [17] combine the topographic map and LiDAR data. Habib et al. [18] propose combining imagery and LiDAR data to extract building contours [18,19].

Position of our work

In our review of the state of the art, we found two principal approaches. The first uses only the point cloud, which preserves its original characteristics but requires memory and time, and yields only one or two data layers, such as buildings, or buildings and vegetation, or handles only vegetation information. The second group of approaches classifies the LiDAR data using remote sensing methods, which are fast and require less memory than the first, but their main drawback is the loss of the point cloud's characteristics and hence of precision.

Our process uses the LiDAR data without any interpolation. To reduce processing time we use masks in the form of a DEM for a single step of the process: we superimpose the point cloud on the DEM to obtain the desired information, then discard the DEM and keep only the point cloud. Our method thus takes the LiDAR point cloud as input and produces its output as a point cloud. Its principal advantages are the preservation of the original characteristics of the point cloud without any transformation, and the use of remote sensing methods to filter the data and reduce processing time. A second novelty is the extraction of several types of information: building, soil, roof, road, vegetation and linear object.

Input Data

To test our algorithm we used LiDAR data freely downloaded from the OpenTopography website (http://www.opentopography.org). These data were surveyed with a Leica ALS50 Phase II LIDAR at a pulse rate of at least 83,000 and less than 105,900 pulses per second (83 to 105.9 kHz), flown between 900 and 1300 m above ground level with a scanning angle of ±14° from nadir. These parameters were chosen to obtain a point cloud with an average density greater than 8 pulses per square meter of land surface and an estimated vertical accuracy of 3.5 cm.

Segmentation Process Developed in this Study

The algorithm developed in this research performs the automatic segmentation of the LIDAR point cloud in order to extract buildings, linear objects, vegetation, soil and roads. The data used are the 3D coordinates (X, Y, Z) of the first echo only.

The first step of this segmentation method immediately creates five classes, listed as follows:

• Upper contour

• Lower Contour

• Uniform surface

• Non-uniform surface

• Linear object

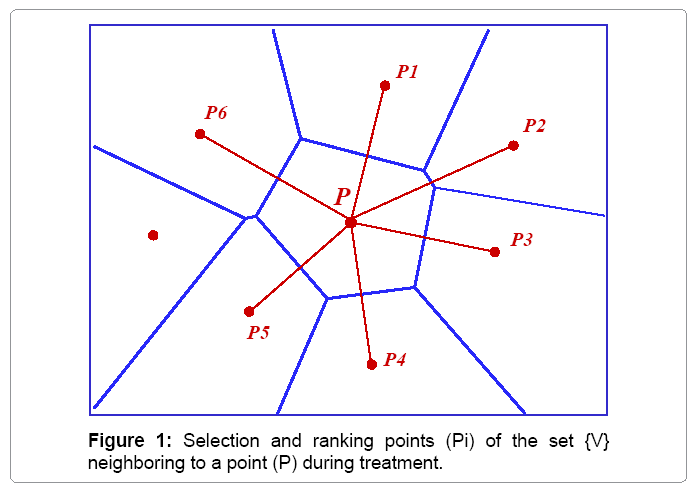

The algorithm uses the Voronoi diagram to select the set of points V = {Pi} that are closer to a given point (P) than any other point in the point cloud. Thereafter, we take all points of the set V and rank them in order of increasing bearing (Figure 1).

Each point is studied locally by successively comparing its altitude with that of its neighbors, in order to determine the class to which the point belongs. The classification mechanism (Algorithm 1) consists, for each point (P) of the cloud, in comparing the elevation differences between (P) and the laser points (np) contained in its neighborhood V with an empirical threshold S1, chosen according to the smallest 3D element to be detected.
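The neighborhood step can be sketched as follows. This is only an illustration under stated assumptions: a fixed-radius search stands in for the Voronoi neighborhood, the ordering is taken to be by increasing bearing (the clockwise angle from the +Y axis), and the function name and `radius` parameter are hypothetical rather than taken from the original implementation.

```python
import math

def neighbors_by_bearing(points, p, radius):
    """Return the neighbors of p within `radius` in the XY plane, sorted by bearing.

    points: list of (x, y, z) tuples; p: the (x, y, z) point being processed.
    Bearing is the clockwise angle from the +Y axis, in [0, 2*pi).
    NOTE: the radius search is an assumed stand-in for the Voronoi neighborhood.
    """
    px, py, _ = p
    found = []
    for q in points:
        if q == p:
            continue  # skip the processed point itself
        dx, dy = q[0] - px, q[1] - py
        if math.hypot(dx, dy) <= radius:
            bearing = math.atan2(dx, dy) % (2 * math.pi)
            found.append((bearing, q))
    found.sort(key=lambda item: item[0])
    return [q for _, q in found]
```

Sorting by bearing walks around the processed point in a consistent rotational order, which makes it easy to reason about which side of the point a height jump occurs on.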

This analysis leads automatically to three cases:

Extraction of linear objects

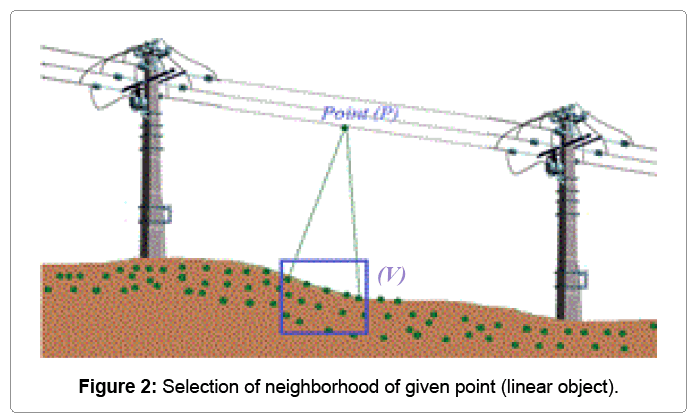

If all the points of the set V show an elevation difference greater than S1, the processed point belongs to the linear object class, as explained by the following steps:

• Let V = {v1, v2, ..., vn} be the set of neighboring points.

• ΔZi = Zp - Zvi, with p the processed point and vi a neighboring point of p.

• If ΔZi > S1 then mi = 1, else mi = 0.

• M = Σ(mi)

For a linear object, M must be equal to n (Figure 2).
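The test above translates almost directly into code. The sketch below assumes the neighbor elevations have already been collected; the function name is illustrative and not from the original implementation.

```python
def is_linear_object(z_p, neighbor_z, s1):
    """Return True when the point stands more than s1 above every neighbor (M == n).

    z_p: elevation of the processed point; neighbor_z: elevations of the set V;
    s1: the empirical height threshold.
    """
    flags = [1 if (z_p - z_v) > s1 else 0 for z_v in neighbor_z]  # the m_i values
    m = sum(flags)                                                # M = sum(m_i)
    return len(neighbor_z) > 0 and m == len(neighbor_z)
```

With the 2 m threshold reported later in the paper, a point at 10 m whose neighbors all lie between 1 m and 2 m would be flagged, while a point with even one neighbor at a similar height would not.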

Extraction of uniform and non-uniform surfaces

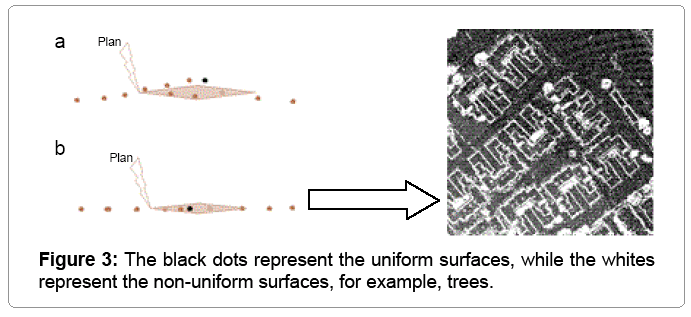

If all the points of the set V have an elevation difference between -S1 and S1, the processed point belongs either to the uniform or to the non-uniform surface class. Which of the two applies depends on the accuracy of the point cloud and on the tolerance chosen for this segmentation.

Case where M = Σ(mi) = 0.

First, if all gradients are within the chosen segmentation threshold (|ΔZi| < S1), we move to a second treatment, which separates these points into two classes: uniform and non-uniform surface.

For this treatment, we fit a plane to the points of the set V; its equation has the general form:

$ax + by + cz + k = 0$

where a, b, c and k are the plane coefficients.

Thereafter, the distance (d) between the processed point (p) and the plane (P) is calculated. Depending on this distance, the point is assigned to the uniform or the non-uniform surface class (Figure 3).
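A minimal sketch of this plane test follows. It makes several assumptions that the text does not state: the plane is fitted by ordinary least squares in the form z = a·x + b·y + c (so it cannot be vertical), a point counts as "uniform" when its distance to the plane is at most a tolerance `tol`, and all function names are hypothetical.

```python
import math

def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def fit_plane(points):
    """Least-squares fit of z = a*x + b*y + c to (x, y, z) points (normal equations)."""
    n = len(points)
    sx = sum(p[0] for p in points)
    sy = sum(p[1] for p in points)
    sz = sum(p[2] for p in points)
    sxx = sum(p[0] * p[0] for p in points)
    syy = sum(p[1] * p[1] for p in points)
    sxy = sum(p[0] * p[1] for p in points)
    sxz = sum(p[0] * p[2] for p in points)
    syz = sum(p[1] * p[2] for p in points)
    a_mat = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    d = det3(a_mat)
    if abs(d) < 1e-12:
        raise ValueError("neighbors are collinear or too few to define a plane")
    coeffs = []
    for col in range(3):  # Cramer's rule, one unknown per column
        m = [row[:] for row in a_mat]
        for r in range(3):
            m[r][col] = rhs[r]
        coeffs.append(det3(m) / d)
    return tuple(coeffs)

def distance_to_plane(p, plane):
    """Perpendicular distance from p = (x, y, z) to the plane z = a*x + b*y + c."""
    a, b, c = plane
    return abs(a * p[0] + b * p[1] - p[2] + c) / math.sqrt(a * a + b * b + 1.0)

def is_uniform(p, neighbors, tol):
    """Assumed rule: uniform surface when the point lies within tol of the fitted plane."""
    return distance_to_plane(p, fit_plane(neighbors)) <= tol
```

Fitting z as a function of x and y keeps the example short; a total least-squares fit would be needed to handle steep or vertical surfaces properly.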

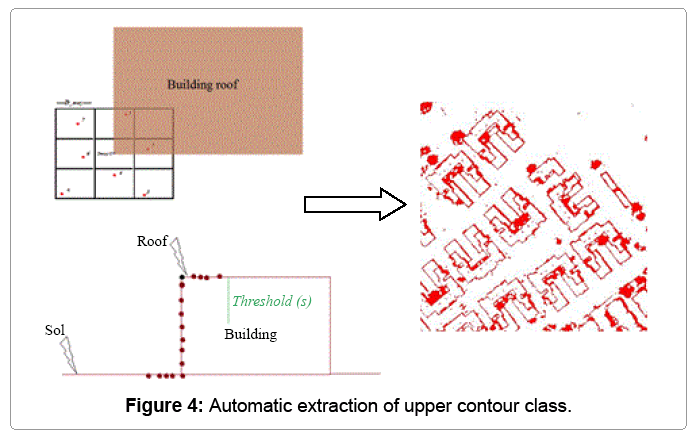

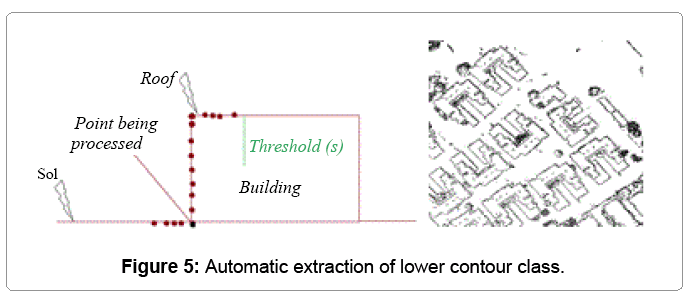

Contour extraction

We have seen two cases: the first, in which all differences ΔZi are above the threshold S1, is the case of linear objects; the second, in which all ΔZi lie inside the interval [-S1, S1], is the case of uniform and non-uniform surfaces. In the third case, some of the ΔZi are greater than S1 while the others lie inside the interval [-S1, S1]. Such points belong to the upper contour of a building (Figure 4). Another type of information to be extracted from LIDAR data, which is important in 3D modeling, is the layer of the lower contour of 3D elements. These points are extracted when some of the ΔZi are less than -S1 and the others lie inside the interval [-S1, S1], as shown in Figure 5.
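The cases described so far can be gathered into one decision function. This is a sketch under assumptions: the class labels are illustrative strings, the "surface" label still has to be split into uniform and non-uniform by the plane test, and any combination not described in the text (for example neighbors both far above and far below) is assigned to "rest".

```python
def classify_point(z_p, neighbor_z, s1):
    """Classify a point from the height differences to its neighbors.

    Returns one of: "linear_object", "surface", "upper_contour",
    "lower_contour", "rest" (labels are illustrative).
    """
    dz = [z_p - z_v for z_v in neighbor_z]
    n = len(dz)
    if n == 0:
        return "rest"
    above = sum(1 for d in dz if d > s1)    # neighbors far below the point
    below = sum(1 for d in dz if d < -s1)   # neighbors far above the point
    inside = n - above - below              # neighbors within [-s1, s1]
    if above == n:
        return "linear_object"
    if inside == n:
        return "surface"
    if above > 0 and inside > 0 and below == 0:
        return "upper_contour"
    if below > 0 and inside > 0 and above == 0:
        return "lower_contour"
    return "rest"
```

For example, a roof-edge point at 8 m with neighbors at 8.1 m, 7.9 m and 2 m is an upper contour point for a 2 m threshold, because one difference exceeds the threshold and the others stay inside the interval.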

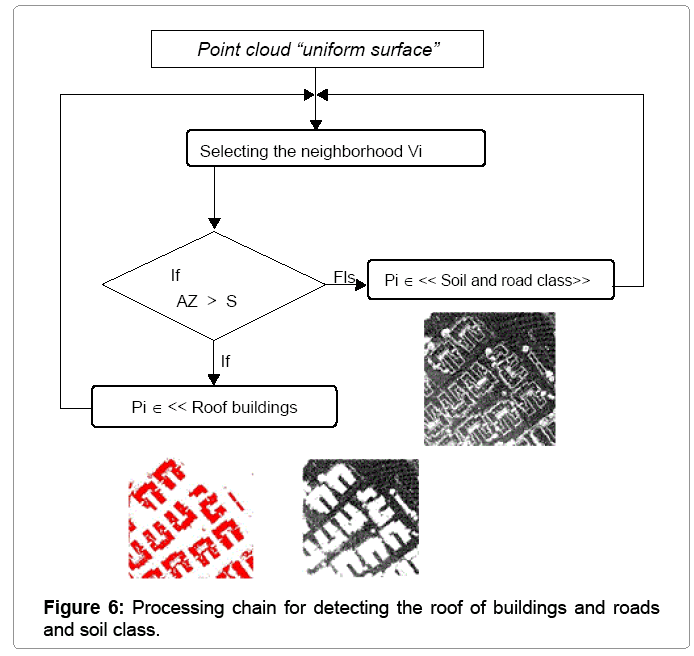

Extraction of roof class

After the detection of the aforementioned five classes, we need to extract the building roof class. It must be extracted from the uniform surface class, which contains building roofs, land and roads as well as other types of information such as vehicles. The second algorithm developed for this extraction is based in part on the principle of Algorithm 1: the segmentation consists of a series of upper contour extractions from the uniform surface layer, repeated until the number of contour points in that layer equals 0 (Figure 6). First, we apply Algorithm 1 to the uniform surface, but only to extract the upper contour, which is stored in the “roof building” class and removed from the uniform surface class. We repeat this operation until the number of uniform class points equals 0.
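The iterative peeling can be illustrated on a simplified case. The sketch below is not the authors' implementation: it works on a 1D height profile, uses the nearest remaining point on each side as a stand-in for the Voronoi neighbors, stops when an iteration finds no contour point, and relaxes the contour test to "at least one neighbor lower by more than S1 and none higher by more than S1" so that the last remaining roof point is also captured. All names are hypothetical.

```python
def peel_roof(profile, s1):
    """Repeatedly extract upper-contour points from a uniform-surface profile.

    profile: list of (x, z) tuples sorted by x, all from the uniform surface class.
    Returns (roof_points, remaining_points).
    """
    remaining = list(profile)
    roof = []
    while True:
        contour = []
        for i, (x, z) in enumerate(remaining):
            nbr_z = []
            if i > 0:
                nbr_z.append(remaining[i - 1][1])  # nearest remaining point on the left
            if i < len(remaining) - 1:
                nbr_z.append(remaining[i + 1][1])  # nearest remaining point on the right
            dz = [z - zn for zn in nbr_z]
            # Relaxed upper-contour test (assumption, see lead-in).
            if any(d > s1 for d in dz) and not any(d < -s1 for d in dz):
                contour.append((x, z))
        if not contour:
            break  # no contour points left: the peeling is finished
        roof.extend(contour)
        remaining = [pt for pt in remaining if pt not in contour]
    return sorted(roof), remaining
```

Because the neighbors are recomputed among the remaining points after each removal, interior roof points become contour points in later iterations, which is what lets the roof be peeled from its edges inward.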

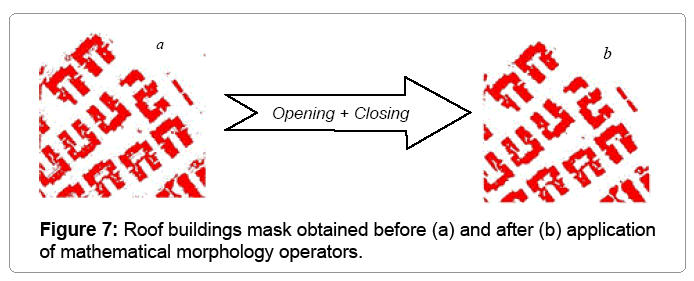

Filtering segmented data

To filter the results of the segmentation, we use masks in the form of a DEM image of each extracted class. Superimposing the point cloud on these filters (the processed DEM of each class) then eliminates the noise. We begin by processing the mask of the building class with mathematical morphology, in two stages: elimination of residual segments, then filling of holes in the body of the segments. This is achieved by two successive operators: the opening, used to remove small segments, and the closing, used to fill holes in the ground surface segments (Table 1).
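The two morphological operators can be sketched on a binary mask as below. The 3 × 3 square structuring element and the treatment of pixels outside the image as background are assumptions chosen for brevity, and the function names are illustrative.

```python
OFFSETS = [(dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1)]  # 3x3 square element

def erode(mask):
    """Keep a pixel only if its whole 3x3 neighborhood is foreground.

    Pixels outside the image count as background (assumption).
    """
    rows, cols = len(mask), len(mask[0])
    return [[int(all(0 <= r + dr < rows and 0 <= c + dc < cols and mask[r + dr][c + dc]
                     for dr, dc in OFFSETS))
             for c in range(cols)] for r in range(rows)]

def dilate(mask):
    """Set a pixel if any pixel of its 3x3 neighborhood is foreground."""
    rows, cols = len(mask), len(mask[0])
    return [[int(any(0 <= r + dr < rows and 0 <= c + dc < cols and mask[r + dr][c + dc]
                     for dr, dc in OFFSETS))
             for c in range(cols)] for r in range(rows)]

def opening(mask):
    """Erosion followed by dilation: removes segments smaller than the element."""
    return dilate(erode(mask))

def closing(mask):
    """Dilation followed by erosion: fills holes smaller than the element."""
    return erode(dilate(mask))
```

Note that an opening applied to a segment containing a small hole enlarges that hole during the erosion step, which is consistent with the gaps being accentuated before the closing is applied.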

| Site | Date of survey | Site area | Average density | Site photo |
|---|---|---|---|---|
| (Site 1) «Westborough», South San Francisco, United States | 21 March 2007 | 3.52 ha | 4.5 pts/m² with 158437 points |  |
| (Site 2) August school, Newark Fremont, United States | 17 April 2007 | 3.96 ha | 5 pts/m² with 196526 points |  |

Table 1: Photo Sites.

In the first stage, the elimination of residual segments, small gaps in building roofs caused by missing survey returns are automatically accentuated. We therefore apply the closing to fill holes in the roof surface segments, and then superimpose the original point cloud on the roof surface mask to extract all roof points. This is the particularity of our algorithm (Figure 7).
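Superimposing the point cloud on a mask amounts to looking up, for each point, the pixel it falls in. The sketch below assumes a mask whose origin `(x0, y0)` is its lower-left corner, with rows increasing along Y and a square pixel of side `pixel_size`; these conventions and the function name are assumptions made for the example.

```python
def points_in_mask(points, mask, x0, y0, pixel_size):
    """Keep the original points whose XY position falls on a foreground mask pixel.

    points: list of (x, y, z); mask: 2D list of 0/1 values;
    (x0, y0): coordinates of the mask's lower-left corner (assumed convention).
    """
    rows, cols = len(mask), len(mask[0])
    kept = []
    for x, y, z in points:
        col = int((x - x0) // pixel_size)
        row = int((y - y0) // pixel_size)
        if 0 <= row < rows and 0 <= col < cols and mask[row][col]:
            kept.append((x, y, z))  # coordinates are returned unchanged
    return kept
```

The mask only selects points; the returned coordinates are the original ones, so the precision of the point cloud is not affected by the raster resolution.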

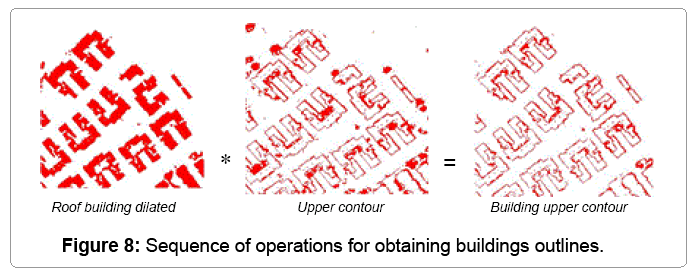

Thereafter, a dilation is applied to the result, which is then multiplied by the upper contour mask to obtain the points of the building contours (Figure 8).

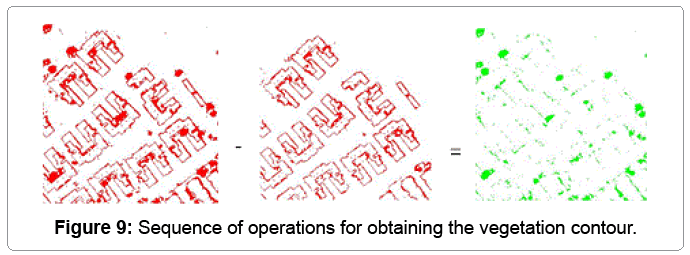

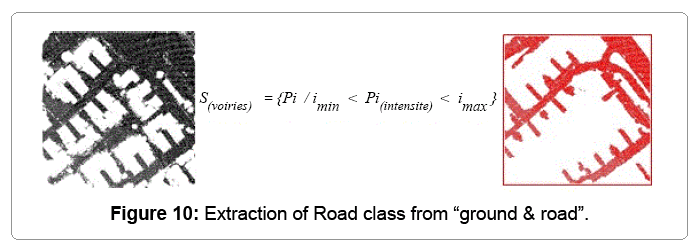

The vegetation class is obtained by subtracting the building upper contour from the upper contour class. Finally, the road class is obtained by separating ground and roads according to intensity (Figure 9). LiDAR points that belong to the road class are those whose intensity lies within the acceptable limits for road materials (bitumen) (Figure 10).
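The intensity-based separation of road and soil can be sketched as a simple range test. The paper does not give the intensity limits for bitumen, so `i_min` and `i_max` below are hypothetical parameters that would have to be calibrated for the sensor and survey; the point layout (x, y, z, intensity) is also an assumption.

```python
def split_ground_by_intensity(ground_points, i_min, i_max):
    """Split ground points into road and soil from their return intensity.

    ground_points: list of (x, y, z, intensity) tuples.
    i_min, i_max: assumed acceptable intensity limits for road material.
    """
    road, soil = [], []
    for pt in ground_points:
        if i_min <= pt[3] <= i_max:
            road.append(pt)
        else:
            soil.append(pt)
    return road, soil
```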

Results and Discussion

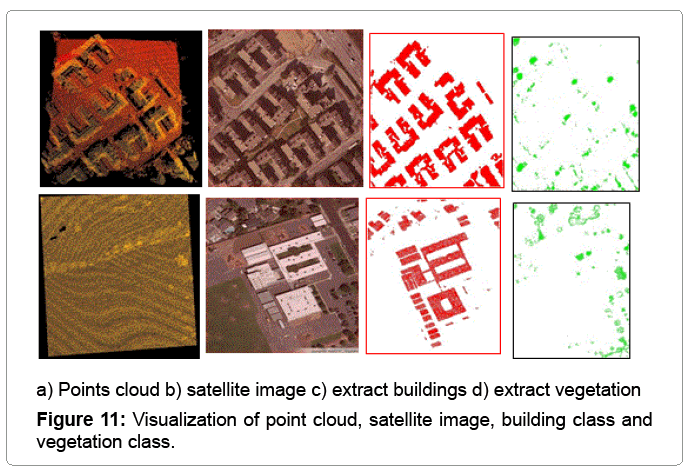

To test our approach, we applied it to two different sites for which we have satellite images: the first is a housing complex and the second is a primary school. The time required to extract the different classes of the first and second sites, covering about 4 ha of surface, is 2 min 26 s, making this method, by our assessment, faster than other methods. Figure 11 shows the extracted buildings on the sites, the satellite images and a visualization of the point cloud. Table 2 shows the building extraction errors and Table 3 the tree extraction errors.

| Site | Number of buildings | Number of detected buildings | Number of undetected buildings | Surface of smallest detected element | Detection error |
|---|---|---|---|---|---|
| Site N°1 | 38 | 38 | 0 | 2.25 m² | 0% |
| Site N°2 | 26 | 26 + 12 (the picture is older than the LIDAR survey) | 0 | 2.00 m² | 0% |

Table 2: Building extraction errors on both sites.

| Site | Number of trees | Number of detected trees | Number of undetected trees | Surface of smallest detected tree | Detection error |
|---|---|---|---|---|---|
| Site N°1 | 17 | 17 | 0 | 2.25 m² | 0% |
| Site N°2 | 23 | 23 | 0 | 2.00 m² | 0% |

Table 3: Tree extraction errors on both sites.

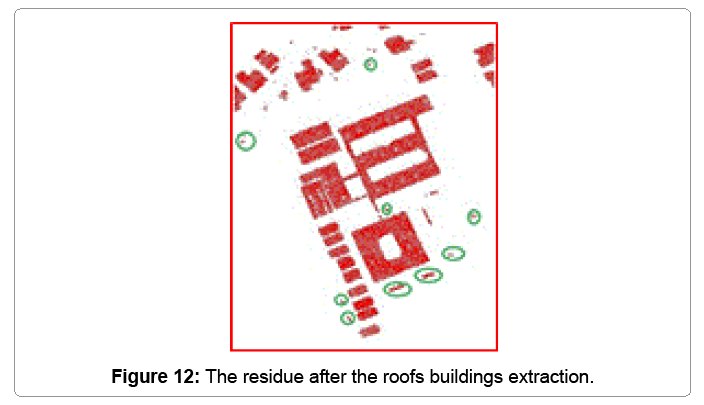

The average density of Site N°1 is 4.5 pts/m², the segmentation threshold is fixed at 2 m, and the noise element is fixed at 3 × 3 pixels, where one pixel covers 0.25 m² on the ground. The surface of the smallest building or tree that can be detected in this segmentation is therefore 2.25 m². As shown in Figure 11, trees adjoining buildings are extracted even when the distance between them is minimal. All the buildings have been extracted without exception: the surface of a building in an urban scene generally exceeds 50 m², and in our segmentation method all 3D elements with a surface greater than 2.25 m² are extracted, as shown in Figure 12.

These residuals represent a layer of information completely different from the building class: a class of moving objects (“vehicles”), which requires further processing to filter the results, or simply a higher segmentation threshold. From this analysis, the approach is effective at extracting 3D objects regardless of their nature, and the distinction between vegetation and buildings is very reliable even when they adjoin each other. However, it is still necessary to separate the moving objects class from the building class.

Conclusions

This paper has presented a new process for the automatic extraction of 3D objects from LIDAR data, composed of three algorithms that use only the first echo and take advantage of the topology of the point cloud. The process produces a set of data layers in the form of point clouds, which preserves the original precision of the cloud without any interpolation. The extraction of 3D objects through this process is carried out regardless of the terrain. However, it should be improved, because in areas with dense vegetation, 3D objects can overlap with the vegetation class.

Summary

Airborne topographic surveying by LIDAR (Light Detection and Ranging), or “lasergrammetry”, generates a point cloud with a density of several points per square meter and a considerable number of points; processing such data is a crucial and necessary step before they can be used. Segmentation is the first step in processing LIDAR data, and this article describes a new segmentation process consisting of three algorithms. All algorithms operate directly on the cloud and classify points without interpolation, which retains the accuracy and quality of the data; the treatment focuses on the topological relationship between each point of the cloud and its neighbors within a given distance. Based on the analysis of the topological relationships of each point (P) of the cloud {S}, the first algorithm generates five classes: non-uniform surface, upper contour, lower contour, uniform surface, and linear object, each in the form of a point cloud.

The second algorithm processes the uniform surface class to highlight two important classes in our study: the class of building roofs and the class of land and roads. The third algorithm separates the road and ground classes and derives the vegetation class.

References

- Wang M, Tseng YH (2004) LIDAR data segmentation and classification based on octree structure. International Archives of Photogrammetry and Remote Sensing 35: 1-6.

- Kraus K, Pfeifer N (1998) Determination of terrain models in wooded areas with airborne laser scanner data. ISPRS Journal of Photogrammetry and Remote Sensing 53: 193-203.

- Kraus K, Mikhail EM (1972) Linear least squares interpolation. Photogramm Eng 38: 1016-1029.

- Lee I, Schenk T (2002) Perceptual organization of 3D surface points. ISPRS Commission III, WG V/3.

- Lari Z, Habib A, Kwak E (2011) An adaptive approach for segmentation of 3D laser point clouds. ISPRS Workshop, Calgary, Canada.

- Lari Z, Habib A (2012) Segmentation-based classification of laser scanning data. ASPRS Annual Conference, Sacramento, California.

- Mallet C, Bretar F, Roux M, Soergel U, Heipke C (2011) Relevance assessment of full-waveform lidar data for urban area classification. ISPRS Journal of Photogrammetry and Remote Sensing 66: S71-S84.

- Höfle B, Hollaus M, Hagenauer J (2012) Urban vegetation detection using radiometrically calibrated small-footprint full-waveform airborne LiDAR data. ISPRS Journal of Photogrammetry and Remote Sensing 67: 134-147.

- Reitberger J, Krzystek P, Stilla U (2008) Analysis of full waveform LIDAR data for the classification of deciduous and coniferous trees. International Journal of Remote Sensing 29: 1407-1431.

- Maas HG (1999) The potential of height texture measures for the segmentation of airborne laserscanner data. Fourth International Airborne Remote Sensing Conference and Exhibition / 21st Canadian Symposium on Remote Sensing, Ottawa, Canada.

- Tóvári D, Vögtle T (2004) Classification methods for 3D objects in laserscanning data. ISPRS Commission VI, III/4.

- Brunn A, Weidner U (1997) Extracting buildings from digital surface models. IAPRS 32: 27-34.

- Pu Shi, Vosselman G (2006) Automatic extraction of building features from terrestrial laser scanning. ISPRS Commission VI.

- Marmol U, Jachimski J (2004) A FFT based method of filtering airborne laser scanner data. Commission III, WG III/3.

- Wang M, Tseng YH (2010) Incremental segmentation of LIDAR point clouds with an octree-structured voxel space. The Photogrammetric Record 26: 32-57.

- Carlberg M (2009) Classifying urban landscape in aerial LiDAR using 3D shape analysis. ICIP-16th IEEE International Conference, Cairo, Egypt: 1701-1704.

- Cheng L, Gong J, Chen X, Han P (2008) Building boundary extraction from high resolution imagery and LIDAR data. International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences 37: 693-698.

- Habib AF, Zhai RF, Kim CJ (2010) Generation of Complex Polyhedral Building Models by Integrating Stereo-Aerial Imagery and LIDAR Data. Photogrammetric Engineering and Remote Sensing 76: 609-623.

- Cheng L, Gong JY, Li MC, Liu YX (2011) 3D Building Model Reconstruction from Multi-view Aerial Imagery and LIDAR Data. Photogrammetric Engineering and Remote Sensing 77: 125-139.
